The Cambridge Analytica revelations are complicated. As story after story has surfaced from outlets including the Guardian, Channel 4 News and The New York Times, it’s been tricky to decipher what’s going on – and, more crucially, what it all means for our privacy and democracy.

Speaking jointly to The Observer and The New York Times, whistleblower Christopher Wylie claimed the analytics company had illegally harvested the data of 50 million Facebook users and used it to build powerful software to predict and influence choices in elections. That claim has big implications for our rights. However, the story doesn’t end there.

What’s The Story Here Then?

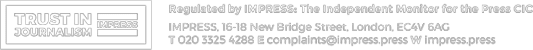

Image Credit: Frontline Club / Twitter

Following the revelations from Mr Wylie, Channel 4 News aired undercover footage appearing to show senior executives from Cambridge Analytica saying they could entrap politicians in compromising situations, as well as claiming they had run “all” of Donald Trump’s digital campaign.

There are also question marks about whether the company worked with pro-Brexit group Leave.EU in the run-up to the European Union referendum. CEO Alexander Nix told a Select Committee that it did not, but this contradicts an earlier column he wrote, as well as statements from Leave.EU founder Arron Banks.

“Mr Nix’s recent comments secretly recorded by Channel 4 and other allegations do not represent the values or operations of the firm and his suspension reflects the seriousness with which we view this violation.”

Cambridge Analytica Board of Directors

The Information Commissioner, who protects our information rights, is currently seeking a warrant to search Cambridge Analytica’s London offices.

The company has suspended its CEO with immediate effect, saying Mr Nix’s undercover comments “do not represent the values or operations of the firm”. It also published a timeline of events, showing when it was made aware that the Facebook data it had received from another company may have been obtained illegally, and when it deleted that data. Cambridge Analytica denies any wrongdoing.

Facebook’s CEO, Mark Zuckerberg, has said the company made “mistakes” and will change the way it shares data with third-party apps.

But what does this all mean in reality? And what does it mean for our rights?

‘Technology is Disrupting Democracy’

Image Credit: Markus Spiske / Unsplash

Speaking this week at the prestigious Hugh Cudlipp lecture, former BBC Director of News, James Harding, told audiences bluntly: “Technology is disrupting democracy. Whether it destroys it is up to us.”

“Mark Zuckerberg’s instruction to move fast and break things was once cool,” he continued. “Less so when it’s democracy […] What’s at stake is a system we can all too easily take for granted. Cambridge Analytica looks like a case study in the abuse of data for political ends.”

There are some serious human rights implications here. A healthy and functioning democracy is one of the cornerstones of our rights, something backed up by Article 3 of the First Protocol of the Human Rights Convention, which mandates there must be “free elections at reasonable intervals by secret ballot, under conditions which will ensure the free expression of the opinion of the people in the choice of the legislature.”

Similarly, privacy is a big consideration in human rights law. We all have a right to a private and family life, protected by the Human Rights Act, and in practice this also covers our correspondence and data. The ins-and-outs of the Cambridge Analytica story are complicated, but one thing is clear – digital technology can have a massive effect on public life and on the life of the individual citizen at the same time.

A Shifting Digital Landscape

Mark Zuckerberg of Facebook. Image Credit: JD Lasica / Flickr

The investigations surrounding both Cambridge Analytica and Facebook are likely to continue for some time, so what can we do to safeguard our data – and our democracy – in the meantime? With technology moving at breakneck speed, it’s proving increasingly difficult to regulate these companies and protect people’s rights.

Both the Council of Europe and the UN have previously made it clear that all of the same rights apply online as well as offline. The Human Rights Court has made judgments surrounding “big data”, stressing that secret surveillance must be “strictly necessary for safeguarding democratic institutions” and that “there must be adequate and effective guarantees against abuse.”

At the individual level, in the case of S and Marper v the United Kingdom, the court emphasised that “[t]he protection of personal data is of fundamental importance to a person’s enjoyment of his or her right to respect for private and family life.”

The EU Charter also provides additional protections for how our personal data is used – although there are still question marks about what will happen to these protections once we leave the European Union in 2019. Similarly, the Data Protection Act 1998 and the incoming General Data Protection Regulation (GDPR) help to ensure that our data isn’t used in damaging ways.

Some people have been quick to blame technology itself, and #deleteFacebook has been trending. Yet social media isn’t inherently bad. It’s important to remember how digital technologies have expanded freedom of expression, and helped some of the most vulnerable in society. The Tech for Good programme is just one example of tech funding for projects that help to empower disabled people, those who are homeless, and women and girls.

“Despite what they say, technology is not neutral,” said James Harding in his lecture. “It’s engineered by people and produced by companies.” Technology is no more good or bad than people themselves – so it’s up to us to find the best solutions and regulations as the digital world evolves.