Police should only use live facial recognition (LFR) software if it can be proved it does not create gender or racial bias in operations, an ethics watchdog has warned.

The London Policing Ethics Panel has today (May 29) released its final report after a review of the Metropolitan Police’s trials of LFR technology since 2016.

“We have come to the view that there are important ethical issues to be addressed but these do not amount to reasons not to use LFR at all,” the report reads.

But it continues: “If there are significant legitimate policing benefits to be gained from LFR these should nevertheless not be gained at the expense of valued liberties.”

The report comes amid the UK’s first High Court battle over the use of LFR, between South Wales Police and campaigner Ed Bridges, who is represented by human rights group Liberty.

What Is Unique About Live Facial Recognition Technology?

LFR technology maps people’s facial features and body measurements, which it then compares in real time with biometric data on police databases and watch lists.

Its use has potentially more far-reaching implications than CCTV because the process of identifying and tracking individuals is largely automated.

And, unlike automatic number plate recognition (ANPR) cameras, which are used to track cars, it identifies people based on a more or less permanent identifying characteristic – namely their face.

Questions have been raised about the technology’s efficacy.

A Freedom of Information request published by civil liberties campaign group Big Brother Watch last month revealed that 96 percent of facial recognition matches misidentified innocent members of the public when the Metropolitan Police deployed the same technology eight times between 2016 and 2018.

Liberty argues that there is no legal framework governing the use of facial recognition technology and that evidence shows it discriminates against women and people from black and minority ethnic backgrounds.

What Ethical Conditions Have Been Placed On LFR Use?

The London Policing Ethics Panel’s report approved the use of LFR provided the following conditions are met:

- The overall benefits to public safety must be great enough to outweigh any potential public distrust in the technology

- It can be evidenced that using the technology will not generate gender or racial bias in policing operations

- Each deployment must be assessed and authorised to ensure that it is both necessary and proportionate for a specific policing purpose

- Operators are trained to understand the risks associated with use of the software and understand they are accountable

- Both the MPS and the Mayor’s Office for Policing and Crime develop strict guidelines to ensure that deployments balance the benefits of this technology with the potential intrusion on the public

What About Human Rights?

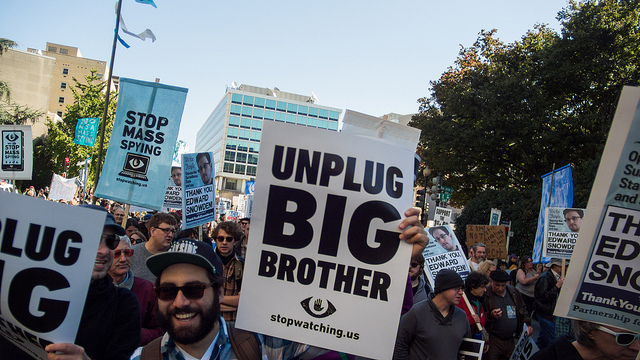

Image Credit: Ella/Flickr

Liberty has said that the use of facial recognition technology breaches the rights to privacy and freedom of expression – Articles 8 and 10 of the European Convention on Human Rights.

Lawyer Megan Goulding said: “Facial recognition is an inherently intrusive technology that breaches our privacy rights.

“It risks fundamentally altering our public spaces, forcing us to monitor where we go and who with, seriously undermining our freedom of expression.

“Today’s report rightly recognises a number of these concerns.

“It is now for police and parliamentarians to face up to the facts: facial recognition represents an inherent risk to our rights, and has no place on our streets.”

Academics from the University of East Anglia and Monash University, Australia, have also called for urgent regulation of LFR – saying it exists in a “legal vacuum”.

What Does The Metropolitan Police Say?

Det Ch Supt Ivan Balhatchet, who has led the MPS’s LFR trials, said: “We welcome the report published by the Ethics Panel which took evidence from a sample of Londoners and highlights important views on the use of this technology.”

He added: “We want the public to have trust and confidence in the way we operate as a police service and we take the report’s findings seriously.

“The MPS will carefully consider the contents of the report before coming to any decision on the future use of this technology.”